What Is the Arm AGI CPU and Why It Matters for AI Data Centers?

After 35 years of licensing chip designs to other companies, Arm has made a bold leap into selling its own finished processors.

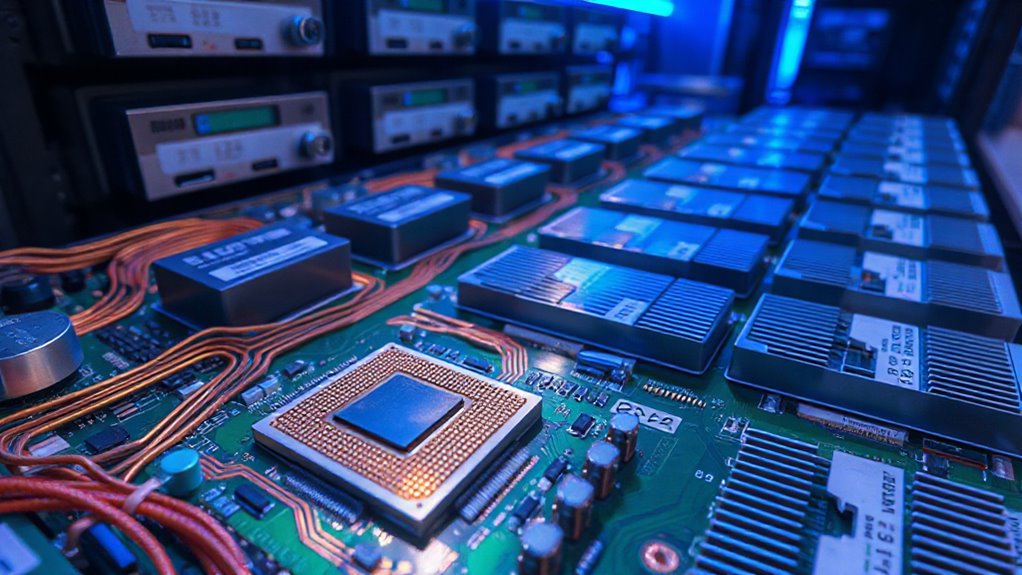

The new Arm AGI CPU, built with Meta’s help, packs up to 136 cores into a single chip using advanced 3-nanometer manufacturing. It’s designed specifically for agentic AI systems, where CPUs coordinate powerful accelerators and manage data flow across massive server farms.

With lightning-fast memory connections and support for the latest AI math instructions, this processor targets a growing problem: today’s AI data centers need smarter CPUs to keep expensive AI accelerators fed with data and working efficiently.

Blockchain-based trading systems can benefit from such hardware by enabling faster settlement times and more efficient coordination between ledgers and AI-driven analytics.

Why Meta and Arm Co-Developed a New Data Center Processor

Behind every great AI system lies a less glamorous truth: the smartest neural networks in the world still need traditional CPUs to shuffle data, coordinate tasks, and keep the whole operation running smoothly.

Meta faced a growing problem as existing processors struggled under the weight of training personal superintelligence systems. Traditional chips simply couldn’t handle the workload efficiently in gigawatt-scale data centers.

The company needed custom silicon that could deliver massive compute power without overheating or draining enormous amounts of electricity. Arm’s expertise in power-efficient design made it a natural partner for building processors around AI’s demanding requirements.

Central banks, acting like an economic thermostat, can influence data center costs through changes in interest rates and broader monetary policy, which affects capital costs and investment decisions.

Arm AGI CPU Technical Specifications: 136 Cores and 45,000 Per Rack

Meta and Arm packed 136 Neoverse V3 cores into each processor, creating what might be the most densely populated CPU designed specifically for AI workloads.

Each core gets 2 MB of private cache, plus access to a shared 128 MB system cache.

The chip sustains a 3.2 GHz clock, boosting to 3.7 GHz when needed.

Built using TSMC’s cutting-edge 3nm process, it draws 300 W of power.

The real magic happens at rack scale: operators can squeeze over 45,000 cores into a single liquid-cooled rack, delivering twice the performance of traditional x86 systems.
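
To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted in this section and treats "over 45,000 cores" as a lower bound, so the chip count it prints is a minimum implied by the arithmetic, not a published rack configuration. The power figure covers CPUs only and leaves out memory, accelerators, networking, and cooling.

```python
import math

# Figures quoted in the article
CORES_PER_CHIP = 136
PRIVATE_CACHE_MB = 2       # per core
SHARED_CACHE_MB = 128      # per chip
CHIP_POWER_W = 300
RACK_CORES_MIN = 45_000    # "over 45,000", so treated as a lower bound

# Total cache on one chip: private cache for every core plus the shared cache
total_cache_mb = CORES_PER_CHIP * PRIVATE_CACHE_MB + SHARED_CACHE_MB

# Average power budget per core
watts_per_core = CHIP_POWER_W / CORES_PER_CHIP

# Fewest chips needed to reach the quoted core count
chips_per_rack = math.ceil(RACK_CORES_MIN / CORES_PER_CHIP)

# CPU-only power for that many chips (assumption: every chip at 300 W)
rack_cpu_power_kw = chips_per_rack * CHIP_POWER_W / 1000

print(f"Cache per chip: {total_cache_mb} MB")
print(f"Power per core: {watts_per_core:.2f} W")
print(f"Chips per rack: at least {chips_per_rack}")
print(f"CPU power per rack: about {rack_cpu_power_kw:.1f} kW")
```

Under these assumptions, a rack needs at least 331 chips, which together draw roughly 99 kW before anything else in the rack is counted. That helps explain why the design calls for liquid cooling.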

Cost Savings and Performance: $10 Billion Per Gigawatt and 2x Efficiency

At the heart of Meta and Arm’s collaboration sits a straightforward business calculation: building AI infrastructure that costs less to run while doing more work.

The AGI CPU delivers twice the performance of comparable x86 processors in identical rack setups, meaning data centers accomplish double the AI workload without expanding physical space.

Energy efficiency represents the architecture’s biggest advantage, with advanced 3nm manufacturing technology slashing power consumption compared to traditional server chips.

Meta estimates these improvements translate to $10 billion in savings per gigawatt of deployed capacity—enough money to fund thousands of research projects or, less excitingly, a truly spectacular number of server fans.

Combining multiple AI models in deployment can further improve a system’s consistency and predictive accuracy, a hybrid approach in which the models work together like the sections of an orchestra.

Which Companies Are Deploying Arm AGI CPUs in Production?

Cost savings matter little if nobody actually uses the technology, which makes the deployment roster for Arm’s AGI CPU particularly remarkable.

Meta leads as the first production customer, integrating these processors into gigawatt-scale data centers alongside its custom MTIA accelerators. OpenAI joins as a launch partner for agentic AI workloads, while Cerebras, Cloudflare, and SAP commit to deployments across cloud and enterprise environments. SK Telecom already runs the chips in production for telecom AI.

Hardware makers including Supermicro, Lenovo, and ASRock Rack now accept orders, with broader availability arriving in late 2026. Many deployments emphasize risk management practices drawn from prop trading, where firms set strict guardrails and evaluation steps before scaling up.

Software Optimizations That Enable Edge-to-Cloud AI Performance

Between the data center and the smartphone in your pocket lies a performance gap that software optimizations must bridge. Model compression techniques like quantization shrink AI models to fit tiny devices: relative to 16-bit floating-point weights, INT8 cuts size in half while INT4 quarters it.
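
As an illustration of the idea, the sketch below shows one common form of quantization, symmetric per-tensor INT8, in plain Python. The function names and the sample weights are made up for this example and do not come from any particular framework. It also tabulates storage for a hypothetical one-million-parameter model, which shows that the savings depend on the starting precision: INT8 is half the size of 16-bit weights but a quarter the size of 32-bit ones.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

def model_size_bytes(num_params, bits_per_weight):
    """Storage needed for the weights alone (ignores scales and metadata)."""
    return num_params * bits_per_weight // 8

# Hypothetical weights, chosen only for illustration
weights = [0.5, -1.27, 0.03, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("largest round-trip error:", max_error)

# Weight storage for a hypothetical 1M-parameter model at each precision
sizes = {bits: model_size_bytes(1_000_000, bits) for bits in (32, 16, 8, 4)}
for bits, size in sizes.items():
    print(f"{bits:>2}-bit weights: {size:,} bytes")
```

The round-trip error is bounded by half the scale factor, which is the accuracy cost paid for the smaller footprint.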

Operator fusion merges steps like convolution and activation into single operations, which avoids writing intermediate results back to memory and boosts speed by 30-50% on edge processors. Hybrid strategies split workloads by latency budget: urgent tasks run locally in 1-10 ms while complex analysis happens in the cloud.
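
To make operator fusion concrete, here is a toy sketch in plain Python that compares a separate convolution and ReLU activation with a fused version. The function names and the input data are invented for this example, and pure Python will not show the speedups quoted above. The point is only the structure: the unfused path builds a full intermediate list, while the fused path applies the activation to each value as soon as it is computed.

```python
def conv1d(signal, kernel):
    """1D sliding-window convolution (cross-correlation, as ML frameworks define it)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]

def relu(values):
    """Activation applied as a second pass over the intermediate result."""
    return [v if v > 0 else 0.0 for v in values]

def conv1d_relu_fused(signal, kernel):
    """Fused version: the activation is applied before each value is stored."""
    k = len(kernel)
    out = []
    for i in range(len(signal) - k + 1):
        acc = 0.0
        for j in range(k):
            acc += signal[i + j] * kernel[j]
        out.append(acc if acc > 0 else 0.0)  # no intermediate buffer needed
    return out

# Hypothetical input, chosen only for illustration
signal = [1.0, -2.0, 3.0, -4.0, 5.0]
kernel = [1.0, 0.5]

unfused = relu(conv1d(signal, kernel))
fused = conv1d_relu_fused(signal, kernel)
print(unfused)
print(fused)
```

Both paths produce the same output. On real hardware the gain comes from skipping the memory round trip for the intermediate result, which compilers and inference runtimes typically arrange automatically.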

Memory tricks like buffer reuse, where layers that are never live at the same time share one block of memory, let models run on devices with less than 1 MB of RAM, making AI accessible everywhere from factories to wearables.
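
A small sketch shows why buffer reuse matters. It assumes a strictly sequential network with no skip connections, so only a layer's input and output need to be in memory at the same moment. The activation sizes are hypothetical and the function names are made up for this example; real memory planners handle more complex graphs.

```python
def peak_without_reuse(activation_sizes):
    """Every activation gets its own buffer for the whole run."""
    return sum(activation_sizes)

def peak_with_reuse(activation_sizes):
    """Only one layer's input and output are live at once, so the peak
    is the largest adjacent pair rather than the sum of everything."""
    return max(a + b for a, b in zip(activation_sizes, activation_sizes[1:]))

# Hypothetical activation sizes in KB: network input, three hidden layers, output
sizes_kb = [96, 256, 128, 64, 10]

naive_kb = peak_without_reuse(sizes_kb)
reused_kb = peak_with_reuse(sizes_kb)
print(f"Separate buffers: {naive_kb} KB")
print(f"With reuse:       {reused_kb} KB")
```

In this made-up case the peak drops from 554 KB to 384 KB, and the gap widens as networks get deeper, because the naive total grows with every layer while the reused peak depends only on the largest neighboring pair.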